AI Model Comparison

GPT-4, Claude, Gemini, Llama, Mistral — what each model actually excels at, where it fails, and what it costs. Updated reference table.

The landscape in 2026

The AI model market is no longer a two-horse race. There are now 5+ frontier-class models from different providers, each with genuine strengths. The “best model” depends entirely on your task, budget, and latency requirements.

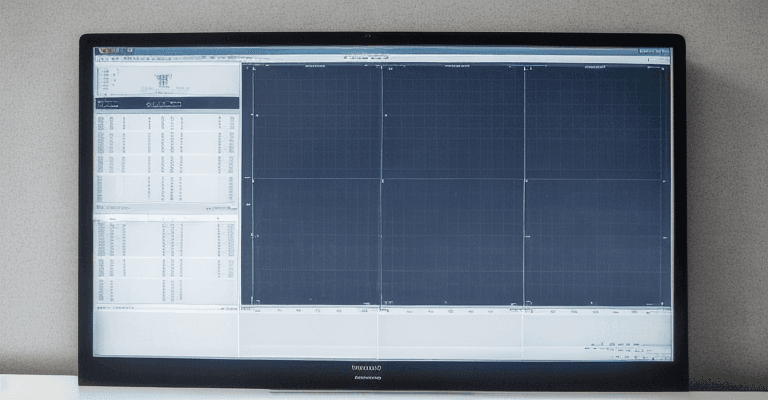

Frontier model comparison

| Model | Provider | Context | Input $/1M | Output $/1M | Best for |
|---|---|---|---|---|---|
| GPT-4o | OpenAI | 128K | $2.50 | $10.00 | General purpose, multimodal, fast |
| Claude Opus 4.6 | Anthropic | 200K-1M | $15.00 | $75.00 | Complex reasoning, long context, code |
| Claude Sonnet 4.6 | Anthropic | 200K | $3.00 | $15.00 | Best quality/cost ratio for most tasks |
| Gemini 2.5 Pro | Google | 1M+ | $1.25 | $10.00 | Massive context, multimodal, Google integration |
| Llama 4 Maverick | Meta | 1M | Self-hosted | Self-hosted | Open-source, full control, no data sharing |
| Mistral Large | Mistral | 128K | $2.00 | $6.00 | European data residency, multilingual |
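
To make the per-million-token prices concrete, here is a minimal Python sketch that turns the table into a per-request cost. The prices are copied from the table above; the function name `request_cost` and the workload of 2,000 input tokens and 500 output tokens are illustrative assumptions, not figures from any provider. Llama is left out because its cost depends on your own hosting.

```python
# Prices in USD per 1M tokens (input, output), copied from the table above.
PRICES = {
    "GPT-4o": (2.50, 10.00),
    "Claude Opus 4.6": (15.00, 75.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "Gemini 2.5 Pro": (1.25, 10.00),
    "Mistral Large": (2.00, 6.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the list-price cost in USD of a single request."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 2,000 input tokens and 500 output tokens per call.
    for model in PRICES:
        print(f"{model}: ${request_cost(model, 2_000, 500):.4f} per request")
```

Output tokens are priced several times higher than input tokens for every hosted model in the table, so response length often matters more than prompt length when estimating spend.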

What each model actually excels at

GPT-4o (OpenAI)

Strengths: Fastest frontier model. Excellent multimodal (image understanding, audio). Strong at structured output (JSON mode). Massive ecosystem of fine-tuning tools and third-party integrations. Most mature API, with function calling, the Assistants API, and file search.

Weaknesses: Shorter context than competitors (128K vs 200K-1M). Can be confidently wrong (hallucination rate higher than Claude's on factual tasks). Fine-tuning is expensive. Consumer ChatGPT conversations may be used for training unless you opt out; API data is not used for training by default.

Best for: Production applications needing speed + multimodal, prototyping, function calling, chatbots.

Claude Opus 4.6 / Sonnet 4.6 (Anthropic)

Strengths: Best at following complex, nuanced instructions. Lowest hallucination rate on factual tasks. Enormous context window (up to 1M tokens on Opus). Strongest at long-document analysis, code generation, and multi-step reasoning. Constitutional AI training means better safety and honesty defaults.

Weaknesses: Opus is expensive ($15 input, $75 output). Can be overly cautious on edge cases. No native image generation. Smaller third-party ecosystem than OpenAI.

Best for: Code generation, document analysis, research, complex reasoning, long-context tasks, safety-sensitive applications.

Gemini 2.5 Pro (Google)

Strengths: Largest context window in production (1M+ tokens). Native multimodal (text, image, audio, video). Direct integration with Google services (Search, Workspace). Competitive pricing. Strong at coding and math benchmarks.

Weaknesses: API stability historically inconsistent. Outputs can be verbose. Safety filters sometimes overcautious. Less predictable than Claude/GPT-4 for structured output.

Best for: Long-document processing, multimodal tasks involving video, Google Workspace integration, cost-sensitive applications needing large context.

Llama 4 Maverick (Meta)

Strengths: Open-weight model (commonly called open-source) that can be self-hosted. No per-token cost once deployed, though you pay for the hardware. Full data privacy: nothing leaves your servers. Can be fine-tuned on your own data, subject to Meta's license terms. 1M context window. Competitive with proprietary models on many benchmarks.

Weaknesses: Requires GPU infrastructure to host (A100/H100). Self-hosted = you manage uptime, scaling, and updates. No built-in safety guardrails — you implement your own. Support is community-based.

Best for: Companies with strict data residency requirements, high-volume applications where API costs are prohibitive, custom fine-tuning needs.

Mistral Large (Mistral)

Strengths: European company (EU data sovereignty). Strong multilingual performance, especially European languages. Competitive pricing. Good structured output. Function calling support.

Weaknesses: Smaller context (128K). Less capable than GPT-4o/Claude on complex reasoning. Smaller ecosystem. Less documentation and community resources.

Best for: EU-based applications, multilingual tasks, GDPR-sensitive workloads.

Cost optimization strategies

- Route by task complexity. Use Haiku/GPT-4o-mini for classification, extraction, and simple Q&A. Use Sonnet/GPT-4o for generation and analysis. Use Opus only for tasks where quality justifies 5-10× the cost.

- Cache repeated context. If your system prompt is 5,000 tokens and you send 100 requests/hour, that’s 500K tokens/hour billed at full price for the same text. Use prompt caching (Anthropic) or assistants with persistent context (OpenAI).

- Batch non-urgent requests. Both Anthropic and OpenAI offer 50% discounts on batch processing (responses within 24 hours instead of real time).

- Compress your prompts. Remove filler. Use abbreviations in system prompts. Every input token you remove reduces cost proportionally.

- Measure output quality, not just cost. A cheaper model that requires 3 retries can cost more than an expensive model that gets it right the first time. Track success rate per model per task.
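
The routing, caching, and retry points above can be sketched in a few lines of Python. Everything named here is a hypothetical illustration: the tier labels ("small", "mid", "large"), the task-type keys, and the function names are assumptions, and `cost_per_success` assumes each retry is an independent attempt with the same success rate.

```python
# Hypothetical mapping from task type to model tier, following the routing advice above.
# "small" stands for Haiku/GPT-4o-mini, "mid" for Sonnet/GPT-4o, "large" for Opus.
TIER_BY_TASK = {
    "classification": "small",
    "extraction": "small",
    "simple_qa": "small",
    "generation": "mid",
    "analysis": "mid",
}


def pick_tier(task_type: str, quality_critical: bool = False) -> str:
    """Pick a model tier; unknown task types default to the mid tier."""
    if quality_critical:
        return "large"
    return TIER_BY_TASK.get(task_type, "mid")


def repeated_prompt_tokens_per_hour(system_prompt_tokens: int, requests_per_hour: int) -> int:
    """Tokens per hour spent resending the same system prompt without caching."""
    return system_prompt_tokens * requests_per_hour


def cost_per_success(cost_per_call: float, success_rate: float) -> float:
    """Expected spend per successful result when failed calls are retried.

    Assumes independent attempts, so the expected number of calls is 1 / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_call / success_rate


if __name__ == "__main__":
    print(pick_tier("classification"))
    print(repeated_prompt_tokens_per_hour(5_000, 100))
    # Made-up numbers: a $0.01 call that succeeds 25% of the time
    # versus a $0.03 call that succeeds every time.
    print(cost_per_success(0.01, 0.25), cost_per_success(0.03, 1.0))
```

With those made-up numbers the cheaper call ends up costing more per usable result, which is why success rate per model per task is worth tracking alongside price.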

The honest assessment

No model is “best.” GPT-4o is fastest and most ecosystem-rich. Claude is most careful and best at complex instructions. Gemini has the largest context and best multimodal. Llama is the only model in this comparison that gives you full data control. Mistral is the EU choice.

If you’re building a product: start with Sonnet or GPT-4o (best quality/cost), measure what fails, then route failures to Opus or add few-shot examples. Don’t start with the most expensive model.
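
One way to express "start cheap, route failures upward" is an escalation wrapper. The sketch below is provider-agnostic and makes no real API calls: `cheap_model`, `strong_model`, and `is_acceptable` are hypothetical callables you would supply yourself (for example, thin wrappers around your provider's client and a validation check suited to your task).

```python
from typing import Callable, Tuple


def answer_with_escalation(
    prompt: str,
    cheap_model: Callable[[str], str],
    strong_model: Callable[[str], str],
    is_acceptable: Callable[[str], bool],
) -> Tuple[str, str]:
    """Try the cheaper model first and escalate only when its output fails the check.

    Returns a (tier, answer) pair so callers can log how often escalation happens.
    """
    draft = cheap_model(prompt)
    if is_acceptable(draft):
        return "cheap", draft
    return "strong", strong_model(prompt)


if __name__ == "__main__":
    # Stub models for illustration only; replace with real client calls.
    def cheap(p: str) -> str:
        return "" if "hard" in p else f"cheap answer to: {p}"

    def strong(p: str) -> str:
        return f"strong answer to: {p}"

    def non_empty(text: str) -> bool:
        return bool(text.strip())

    print(answer_with_escalation("easy question", cheap, strong, non_empty))
    print(answer_with_escalation("hard question", cheap, strong, non_empty))
```

Logging the returned tier shows what fraction of traffic needs the expensive model, which is the measurement the advice above depends on.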

Articles in this guide

AI Model Benchmarks That Actually Matter — Beyond MMLU and HumanEval

A practitioner's guide to which AI benchmarks predict real-world performance, how to detect benchmark gaming, and which evaluation metrics correlate with actual user satisfaction.

AI Model Pricing Decoded — Cost Per Million Tokens Across GPT-4o, Claude, Gemini, and Llama

Detailed pricing tables and breakeven analysis for major AI models, with cost-per-call math at different prompt lengths and guidance on when each model is cost-optimal.

Choosing the Right AI Model for Your Task — A Decision Framework

A practical decision matrix mapping task types to model recommendations, with cost-quality tradeoff tables and guidance on when small models outperform large ones.

Context Window Comparison — What 128K, 200K, and 1M Tokens Actually Means for Your Workflow

A practical guide to context window sizes across major AI models, with tokens-to-pages conversion, attention degradation data, and when to use retrieval instead of context stuffing.