The gap between demo and production

Every AI demo works. The challenge is getting from a notebook prototype to a production system that handles 10,000 requests per hour, costs less than $0.02 per call, and fails gracefully when the model hallucinates. That gap between demo and production is where most AI projects die.
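To make those targets concrete, here is a minimal sketch of the spend ceiling they imply. The request rate and per-call cost come from the paragraph above; the 30-day month and the function name `spend_ceiling` are assumptions made for illustration.

```python
# Figures taken from the paragraph above.
REQUESTS_PER_HOUR = 10_000
MAX_COST_PER_CALL_USD = 0.02

# Assumption: a 30-day month at constant load, which real traffic rarely matches.
HOURS_PER_MONTH = 24 * 30


def spend_ceiling(requests_per_hour: int, cost_per_call: float, hours: int) -> float:
    """Upper bound on spend if every call costs exactly the per-call limit."""
    return requests_per_hour * cost_per_call * hours


hourly = spend_ceiling(REQUESTS_PER_HOUR, MAX_COST_PER_CALL_USD, 1)
monthly = spend_ceiling(REQUESTS_PER_HOUR, MAX_COST_PER_CALL_USD, HOURS_PER_MONTH)

print(f"Hourly ceiling:  ${hourly:,.2f}")
print(f"Monthly ceiling: ${monthly:,.2f}")
```

Under these assumptions, the per-call limit alone allows roughly $200 per hour, or about $144,000 over a 30-day month, so a per-call target only becomes meaningful once it is paired with a volume estimate.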

This hub covers the engineering decisions that determine whether an AI system survives production: RAG architecture choices with quality-cost tradeoffs, fine-tuning decision frameworks with breakpoint analysis, embedding model selection with real benchmark data, evaluation frameworks that catch regressions before users do, and cost optimization techniques that cut bills by 60-80% without quality loss.

The AI development stack

| Layer | Decision | Key metric | Common mistake |
|---|---|---|---|
| Model selection | Which model for which task | Quality per dollar | Using one model for everything |
| Retrieval (RAG) | How to ground responses in data | Faithfulness score | Treating RAG as a solved problem |
| Fine-tuning | When to adapt the model itself | Task accuracy vs base model | Fine-tuning when prompting suffices |
| Evaluation | How to measure quality at scale | Correlation with human judgment | Relying on vibes instead of metrics |
| Deployment | How to serve at scale | Latency × cost × reliability | Over-engineering before validating demand |
| Observability | How to monitor in production | Drift detection, error rate | Logging everything, alerting nothing |
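As one way to read the "Quality per dollar" metric in the model selection row, the sketch below picks a model per task rather than using one model for everything. The model names, quality scores, and prices are hypothetical placeholders, and the selection rule (meet a quality floor, then maximize quality per dollar) is an assumed interpretation, not a method defined by this hub.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Candidate:
    name: str
    quality: float        # score in [0, 1] measured on your own evaluation set
    cost_per_call: float  # USD per call


def quality_per_dollar(c: Candidate) -> float:
    return c.quality / c.cost_per_call


def pick_model(candidates: Sequence[Candidate], min_quality: float) -> Optional[Candidate]:
    """Return the best quality-per-dollar candidate that meets the quality floor."""
    eligible = [c for c in candidates if c.quality >= min_quality]
    if not eligible:
        return None  # no candidate is good enough for this task
    return max(eligible, key=quality_per_dollar)


# Hypothetical numbers for illustration only; replace with measured values.
CANDIDATES = [
    Candidate("small-model", quality=0.78, cost_per_call=0.002),
    Candidate("medium-model", quality=0.86, cost_per_call=0.008),
    Candidate("large-model", quality=0.93, cost_per_call=0.030),
]

for task, floor in [("classification", 0.75), ("summarization", 0.85), ("legal drafting", 0.90)]:
    choice = pick_model(CANDIDATES, floor)
    print(task, "->", choice.name if choice else "no eligible model")
```

With these placeholder numbers, each task lands on a different model, which is the point of the row: the quality floor depends on the task, so the best value model does too.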

Why this hub exists

Most AI development content falls into two categories: vendor documentation (optimized to sell their platform) and academic papers (optimized for novelty, not deployment). The practitioner working at 2 AM trying to figure out why their RAG system returns irrelevant chunks needs neither. They need decision frameworks with real numbers.

Every article in this hub provides a decision matrix with cost breakpoints, a comparison table with honest tradeoffs, and a “when to use what” framework grounded in production data rather than benchmarks alone.

Articles in this guide

AI Agent Design Patterns — Tool Use, Planning, and Memory Architectures

Agent architecture decision matrix comparing ReAct, Plan-and-Execute, and Tree-of-Thought with tool integration patterns, memory systems, and failure mode analysis for production agent systems.

AI API Integration Patterns — Direct Call vs Streaming vs Batch Processing

Latency, cost, and complexity comparison across AI API integration patterns with architecture decision matrix, failure handling strategies, and production throughput data.

AI Cost Optimization in Production — Techniques That Cut Spend by 60-80%

Cost reduction technique comparison with percentage savings, implementation effort, and quality impact data across model routing, caching, prompt compression, and architectural patterns.

AI Evaluation Frameworks — Test Suites That Catch Regressions Before Users Do

Metric selection matrix by task type, evaluation framework comparison across RAGAS, DeepEval, and custom suites, with regression detection architecture and production monitoring patterns.

AI Feature Flagging — Gradual Rollout, A/B Testing, and Safe Deployment Patterns

Rollout strategy decision tree for AI features with risk-speed tradeoff analysis, A/B testing methodology for LLM outputs, and feature flag architecture patterns for model swaps and prompt changes.

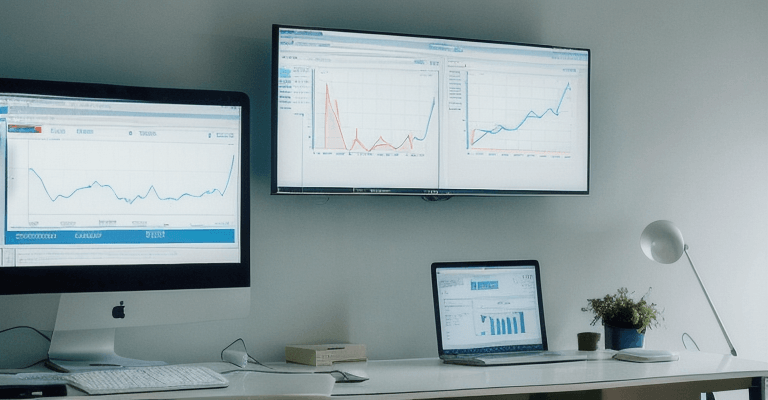

AI Observability in Production — What to Measure, When to Alert, and What to Ignore

Dashboard metric priority matrix covering alert vs log vs ignore decisions, LLM monitoring platform comparison, drift detection methodology, and cost-effective observability architecture.

Embedding Models Compared — Dimensions, Speed, Cost, and Retrieval Quality

20+ embedding models compared across 6 dimensions with MTEB benchmarks, domain-specific performance data, dimensionality analysis, and a selection decision framework.

Fine-Tuning vs Prompt Engineering — The Decision Framework with Cost Breakpoints

When fine-tuning beats prompting and when it doesn't, with quality threshold data, cost breakpoint analysis across training and inference, and a decision tree for production AI systems.

RAG Architecture — Prototype to Production in Three Stages

Architecture comparison across naive, advanced, and modular RAG with retrieval quality metrics, chunking strategy performance data, and production scaling patterns.

Vector Database Comparison — Pinecone, Weaviate, Chroma, Qdrant, and 4 More

8 vector databases compared across 10 dimensions including query latency at scale, pricing models, filtering capabilities, and operational complexity with a selection decision matrix.