Why AI safety is an engineering problem, not a compliance checkbox

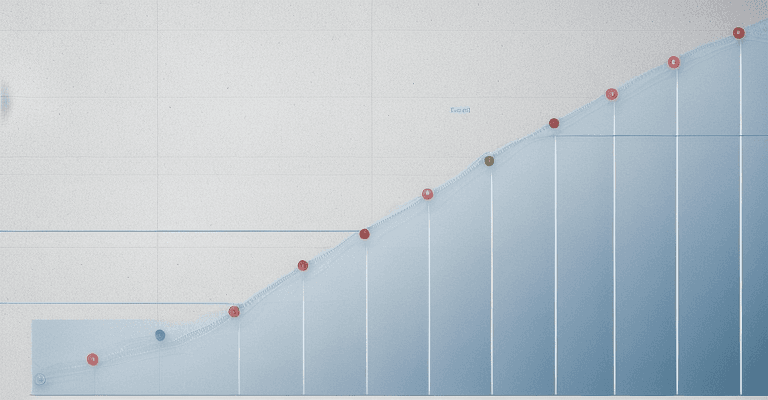

Every production AI system hallucinates. The hallucination rate varies from 3% to 27% depending on the model, task type, and retrieval architecture. The question is never “does my model hallucinate?” — it’s “at what rate, on which inputs, and what’s the cost of each failure?”

This hub covers the practitioner’s side of AI safety: detection methods with real accuracy numbers, bias metrics you can actually measure, guardrail architectures that block harmful outputs without destroying usefulness, and deployment checklists grounded in regulatory requirements (EU AI Act, NIST AI RMF, ISO 42001) rather than aspirational principles.

The safety stack

AI safety in production has four layers, each with different tools and failure modes:

| Layer | What it catches | Tools | Failure mode |

|---|---|---|---|

| Input filtering | Prompt injection, jailbreaks, adversarial inputs | Input classifiers, regex, embedding similarity | False positives block legitimate users |

| Model-level safety | Harmful generation, bias amplification, hallucination | RLHF, constitutional AI, safety fine-tuning | Overcautious refusals, subtle bias leakage |

| Output validation | Factual errors, format violations, policy breaches | RAG faithfulness checks, rule engines, human review | Latency cost, reviewer fatigue, edge case gaps |

| Monitoring | Drift, emergent behavior, adversarial adaptation | Log analysis, anomaly detection, red team exercises | Alert fatigue, evolving attack vectors |

No single layer is sufficient. Production AI safety is defense in depth — and each layer has a cost in latency, false positive rate, and engineering complexity.

The regulatory landscape is not optional

The EU AI Act enforcement begins in 2026. High-risk AI systems now require documented risk assessments, bias testing, human oversight mechanisms, and transparency disclosures. Non-compliance penalties reach 7% of global revenue.

NIST AI Risk Management Framework and ISO 42001 are voluntary but increasingly expected by enterprise customers and investors. If you’re deploying AI to external users, these frameworks define the minimum expectations.

This hub provides the decision frameworks, threshold tables, and audit checklists that turn regulatory requirements into engineering tasks.

What this hub does not cover

This hub does not cover AI ethics philosophy, AI alignment research, or existential risk debates. Those are important topics — but not what a practitioner needs when they’re shipping a model to production next quarter. Every article here answers a specific engineering question with data tables, decision matrices, and measurable thresholds.

Related across the network

Articles in this guide

AI Bias Detection — Demographic Parity, Equal Opportunity, Calibration, and When Each Metric Applies

Fairness metric decision tree per use case, measurement methodology, regulatory requirements, and practical implementation for production AI systems.

AI Content Filtering — Guardrails That Block Without Breaking User Experience

False positive and negative rate comparison across filtering approaches, latency impact, implementation patterns, and the tradeoff between safety and usability.

Types of AI Hallucinations — Factual, Logical, Attribution, and How to Detect Each

Taxonomy of AI hallucination types with detection methods, failure rates by model and task, and a diagnostic decision tree for production systems.

AI Model Audit Guide — Pre-Deployment Testing for EU AI Act, NIST, and ISO 42001

Regulatory requirement mapping across EU AI Act, NIST AI RMF, and ISO 42001 with audit checklist, documentation templates, and compliance evidence collection methodology.

AI Transparency and Explainability — SHAP, LIME, Attention, and When Each Method Works

Explainability method comparison by use case, computational cost, faithfulness to model behavior, and regulatory requirements for AI decision explanation.

Hallucination Detection Methods — RAG Faithfulness, Semantic Similarity, and Production Pipelines

Comparison of hallucination detection tools (RAGAS, DeepEval, Galileo, TruLens) with accuracy, cost, and latency data for production deployment.

LLM Safety Testing — Red Teaming, Adversarial Prompts, and Systematic Attack Taxonomies

Attack vector taxonomy with mitigation effectiveness per vector, red team methodology, and a structured approach to finding vulnerabilities before your users do.

Model Evaluation Beyond Benchmarks — Why MMLU Doesn't Predict Production Performance

Benchmark-to-production correlation data showing divergence, task-specific evaluation methodology, and a framework for building evaluations that predict real-world quality.

Prompt Injection Defense — Attack Classification, Sanitization Patterns, and Defense Effectiveness Rates

Injection attack taxonomy with defense effectiveness rates per attack category, implementation patterns for input sanitization, and layered defense architecture.

Responsible AI Deployment Checklist — 40 Points from Prototype to Production

Pre-deployment checklist with pass/fail criteria covering safety testing, bias audits, monitoring, documentation, and regulatory compliance for production AI systems.